From Words to Numbers: Understanding Language Model Embeddings in Python


Introduction

If you’ve ever wondered how computers, which fundamentally understand only numbers, can grasp the intricacies of human language filled with words carrying complex meanings, you’re not alone. In this article, we dive deep into the world of language model embeddings and teach you how to utilize them in Python.

What is Word Embedding?

A word embedding represents a word as a dense vector of numbers that a computer can process. The strength of this approach is that the nuanced connotations of words and the relationships between them become geometric relationships between vectors. A good embedding should:

- Capture similarities and differences: Good embeddings accurately reflect the relationships between words. For instance, “cat” and “kitten” might be closer in the embedding space than “cat” and “dog”.
- Capture features of the word itself: Beyond relationships, embeddings should also encode characteristics of the word, such as whether it’s a noun or a verb, or whether it’s associated with positive or negative sentiment.
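To make “closer in the embedding space” concrete, here is a minimal sketch of cosine similarity, the measure used later in this article. The three-dimensional vectors are invented purely for illustration (real models produce vectors with hundreds of dimensions), so the scores say nothing about any actual model; only the arithmetic is the point.

```python
import numpy as np

def cosine_sim(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical toy embeddings, made up for illustration only.
toy_embeddings = {
    "cat": np.array([0.9, 0.8, 0.1]),
    "kitten": np.array([0.85, 0.9, 0.05]),
    "dog": np.array([0.7, 0.1, 0.6]),
}

# Score each pair: values near 1 mean the vectors point in a similar direction.
scores = {}
for a, b in [("cat", "kitten"), ("cat", "dog"), ("dog", "kitten")]:
    scores[(a, b)] = cosine_sim(toy_embeddings[a], toy_embeddings[b])
    print(f"{a} vs {b}: {scores[(a, b)]:.3f}")
```

Cosine similarity ignores vector length and compares only direction, which is why it is a common choice for comparing embeddings.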

Overview of Embedding Models: Leading Open Source Solutions and API-based Offerings

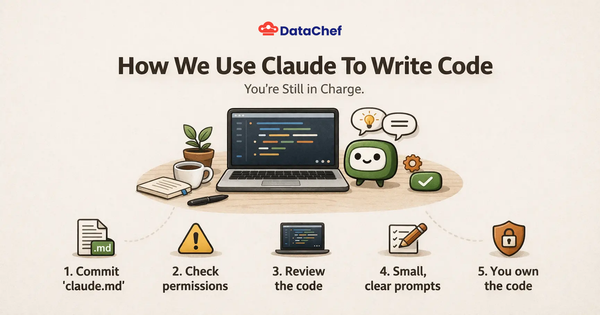

There’s an ocean of embedding models available today. The sentence transformers library, built on top of the Hugging Face Transformers library, gathers many of them behind a single interface. Based on the library’s own model performance comparisons, one of the best-performing general-purpose models at the time of writing is all-mpnet-base-v2, which is built on Microsoft’s MPNet model.

For hosted API services, the OpenAI Embeddings API and Cohere’s Embed API are popular choices. They let you integrate embeddings quickly without deploying and serving a model yourself.

Prepare Your Python Environment

To dive into word embeddings, you’ll first need to set up your environment. The sentence transformers library is an excellent starting point. Install it using:

```bash
pip install sentence-transformers
```

Be aware that this command also installs dependencies such as torch (PyTorch) and transformers, which are large and can take a while to download.

Example Word Embedding

Now, let’s revisit the “cat,” “kitten,” and “dog” example from the previous section to see embeddings in action. We’ll use the all-mpnet-base-v2 model, which is available in the sentence transformers library. First, we import the SentenceTransformer class and load the model (it is downloaded automatically the first time it is used):

```python
from sentence_transformers import SentenceTransformer

# Downloads the model on first use, then loads it from the local cache
model = SentenceTransformer('all-mpnet-base-v2')
```

Next, we’ll calculate the embeddings for the words “cat,” “kitten,” and “dog” using the encode() method:

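```python
# Get embeddings: encode() takes a list of strings and returns one vector
# per string, so [0] selects the single vector for each word
cat_vector = model.encode(["cat"])[0]
kitten_vector = model.encode(["kitten"])[0]
dog_vector = model.encode(["dog"])[0]
```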

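Finally, we’ll calculate the cosine similarity between the vectors using scikit-learn’s cosine_similarity function (install it with pip install scikit-learn if it isn’t already present):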

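```python
from sklearn.metrics.pairwise import cosine_similarity

# cosine_similarity expects 2-D inputs (lists of vectors) and returns a matrix,
# so [0][0] extracts the single similarity score
cat_kitten_similarity = cosine_similarity([cat_vector], [kitten_vector])[0][0]
cat_dog_similarity = cosine_similarity([cat_vector], [dog_vector])[0][0]
dog_kitten_similarity = cosine_similarity([dog_vector], [kitten_vector])[0][0]

print(f"Similarity between cat and kitten: {cat_kitten_similarity}")
print(f"Similarity between cat and dog: {cat_dog_similarity}")
print(f"Similarity between dog and kitten: {dog_kitten_similarity}")
```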

The output:

```
Similarity between cat and kitten: 0.7964548468589783
Similarity between cat and dog: 0.6081227660179138
Similarity between dog and kitten: 0.520395815372467
```

The results show that the model has captured the relationships between the words: “cat” is closer to “kitten” (about 0.80) than to “dog” (about 0.61), and “dog” and “kitten” form the least similar pair (about 0.52).

What is Sentence Embedding?

One step beyond word embeddings are sentence embeddings. While word embeddings represent individual words as vectors, sentence embeddings represent an entire sentence as a single vector. This is particularly useful when the meaning of a word depends on the context in which it’s used.
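How does a model turn a sentence into one vector? One common strategy is mean pooling: average the per-token vectors the transformer produces, skipping padding positions, and often normalize the result to unit length. The NumPy sketch below illustrates only that arithmetic; the token vectors are random stand-ins (an assumption, since a real model computes them), and the helper names mean_pool and l2_normalize are hypothetical, not part of the sentence-transformers API.

```python
import numpy as np

def mean_pool(token_vectors: np.ndarray, attention_mask: np.ndarray) -> np.ndarray:
    """Average token vectors over real tokens only (hypothetical helper).

    token_vectors:  (seq_len, dim) array, one row per token
    attention_mask: (seq_len,) array of 1s (real token) and 0s (padding)
    """
    mask = attention_mask[:, None].astype(token_vectors.dtype)  # shape (seq_len, 1)
    summed = (token_vectors * mask).sum(axis=0)  # sum of the real-token rows
    count = max(mask.sum(), 1e-9)  # guard against division by zero
    return summed / count

def l2_normalize(v: np.ndarray) -> np.ndarray:
    """Scale a vector to unit length so dot products equal cosine similarity."""
    return v / np.linalg.norm(v)

rng = np.random.default_rng(0)
# Stand-in for a transformer's output: 6 token positions, 8 dimensions each
token_vectors = rng.normal(size=(6, 8))
# The last two positions are padding and must not affect the sentence vector
attention_mask = np.array([1, 1, 1, 1, 0, 0])

sentence_vector = l2_normalize(mean_pool(token_vectors, attention_mask))
print(sentence_vector.shape)
```

Because the token vectors come from a transformer that sees the whole sentence, each one already reflects its context, so even a simple average carries more than a bag of isolated word meanings.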

Example Sentence Embedding

Language is intricate. Often, simply changing a word’s position can alter a sentence’s entire meaning. For instance, “I love dogs, not cats” means something quite different from “I love cats, not dogs.” Because a sentence embedding is computed from the whole sentence, word order can influence the resulting vector, which is what allows it to capture such nuances.

Moreover, with multilingual embeddings, a model trained on multilingual text maps sentences from different languages into the same vector space, so they can be compared directly. This is useful for tasks such as cross-lingual search or matching a sentence with its translation.

Conclusion

In conclusion, this post has offered a glimpse into the world of language model embeddings and their role in representing words and sentences in a numerical format. Starting from the foundational concepts of word and sentence embeddings, we set up a Python environment and worked through practical examples of the essential aspects of this subject.

Frequently Asked Questions

What is the fundamental purpose of word embedding?
Word embeddings aim to represent words in a numerical format that computers can process, enabling them to capture intricate word relationships and meanings.

How do word embeddings capture word relationships?
Good word embeddings capture word relationships by placing similar words closer together in the embedding space. For example, “cat” and “kitten” would be closer than “cat” and “dog.”

What is the significance of sentence embeddings?
Sentence embeddings go beyond individual words, representing entire sentences as numerical vectors. They are essential for understanding context-dependent word meanings and enabling multilingual comparisons.

Which Python library is recommended for exploring word embeddings?
The sentence-transformers library is an excellent starting point for working with embeddings in Python, offering practical tools and pre-trained models for various natural language processing tasks.