King Kong vs. Godzilla: Code Generator vs. Code Reviewer

There's a particular kind of engineering conversation I genuinely enjoy. Not the kind where someone brings a problem they already have the answer to and just wants you to nod along. I mean the kind where someone says something that makes you stop and reconsider what you thought you understood.

I had one of those recently with Farbod, a sharp engineer on a client project I know well. He mentioned, almost in passing, that it was becoming harder to keep up with pull requests in any meaningful way. Not just skim them. Actually review them. Understand what changed, why, whether it held together, and what it might break downstream.

I was genuinely surprised. I knew that team. It hadn't grown recently. So where was all this code coming from?

His answer was simple. The team had adopted AI coding tools.

That single sentence reframed the entire conversation for me.

The cost of creating code just dropped through the floor

For the past few years, we've watched AI systematically lower the cost of producing things. First text. Then images. Now code. Unlike earlier waves, this one hits at the center of how software teams operate.

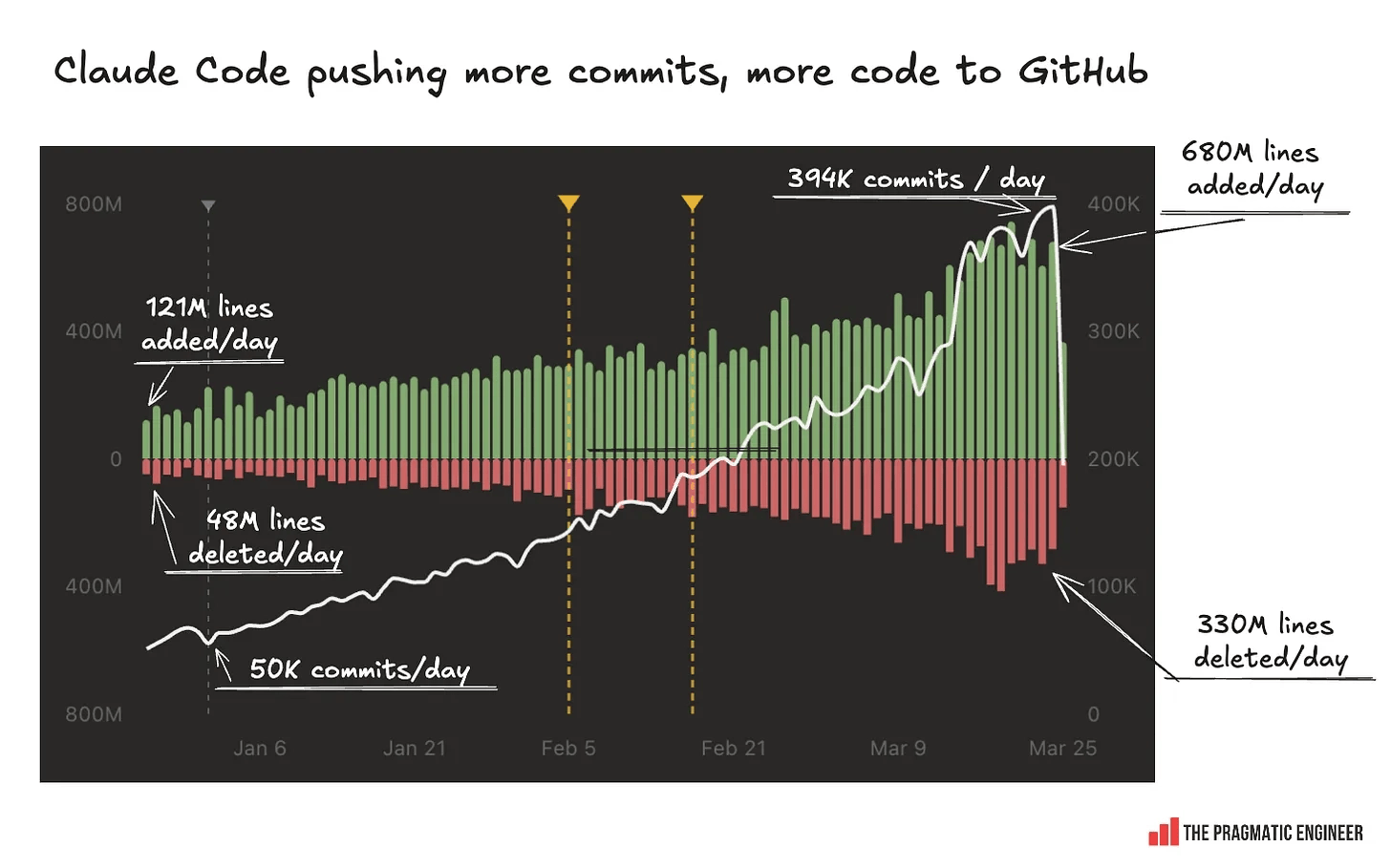

The numbers aren't subtle. GitHub reports that AI coding assistants now generate 46% of code written by developers on the platform, with Gartner projecting this will reach 60% of all new code by the end of 2026. 84% of developers now use AI tools daily. A GitHub study of 4,800 developers found tasks were completed 55% faster when using Copilot.

The production side of the equation is working exactly as advertised. Engineers are shipping more, faster, with smaller teams. It's genuinely impressive. It's also, as Farbod quietly pointed out, only half the story.

What the productivity benchmarks leave out

Measuring output is easy. You count commits, pull requests, features shipped. The metrics are right there on the dashboard. What's harder to measure, and therefore easier to ignore, is whether any of it is actually being understood by another human before it reaches production.

Code review isn't a formality. It's the moment where a second mind engages with what the first mind built. It's where assumptions get questioned, edge cases get caught, and institutional knowledge gets transferred. For many teams, it's the last meaningful quality gate before something goes live.

And that gate is now being asked to process dramatically more traffic than it was designed for.

By the end of 2025, monthly code pushes on GitHub crossed 82 million, with merged pull requests hitting 43 million and roughly 41% of new code AI-assisted.

Independent analysis found roughly 1.7 times more issues in AI-coauthored pull requests compared to code written without AI assistance. AI-generated code often looks convincing and perfectly formatted, which gives a false sense of correctness and security.

More volume. More risk per line. Same number of human reviewers.

There's a name for what's happening here

Eliyahu Goldratt wrote about this dynamic in manufacturing decades ago, in a book called The Goal. His insight was deceptively simple: in any system, there is always one constraint that limits the throughput of the whole. It doesn't matter how fast every other part of the pipeline runs. The constraint sets the ceiling. Everything else is just inventory piling up in front of it.

He called it the Theory of Constraints. His first instruction wasn't to fix the constraint immediately. It was to identify it clearly and then exploit it: get the maximum possible output from that bottleneck before doing anything else.

What Farbod was describing is a constraint most engineering teams haven't yet named: the code review queue. It was always a potential bottleneck, but for years it was kept in check by the natural limits of human code production. A developer can only write so much in a day. Review capacity roughly matched.

AI generation broke that equilibrium. The production side scaled. The review side did not. Your system can only move as fast as its slowest part. And right now, for a growing number of teams, the slowest part is the human trying to make sense of what the AI just wrote.

The uncomfortable truth is that many teams aren't actually shipping faster. They're generating faster. The code is sitting in review queues, half-understood, waiting. That's not throughput. That's inventory. Goldratt would recognize it immediately.
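To make the arithmetic concrete, here is a minimal sketch of a review queue. The rates are made-up illustrative numbers, not measurements from Farbod's team, and the function name is hypothetical. It assumes reviewers clear a fixed number of pull requests per day and that nothing merges without review.

```python
def simulate_review_queue(prs_opened_per_day, prs_reviewed_per_day, days):
    """Track the review backlog and total merged PRs over a number of days.

    Assumption: review capacity is fixed, and a PR merges only after review.
    """
    backlog = 0
    merged_total = 0
    backlog_history = []
    for _ in range(days):
        backlog += prs_opened_per_day
        # Reviewers can only clear what their capacity allows.
        reviewed = min(backlog, prs_reviewed_per_day)
        backlog -= reviewed
        merged_total += reviewed
        backlog_history.append(backlog)
    return backlog_history, merged_total


if __name__ == "__main__":
    # Hypothetical rates: generation rises from 10 to 18 PRs a day,
    # while review capacity stays at 10.
    print(simulate_review_queue(10, 10, 5))
    print(simulate_review_queue(18, 10, 5))
```

With these assumed rates, both scenarios merge the same number of pull requests over the week. The only thing that grows in the second one is the backlog, which is the inventory Goldratt describes.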

So what does exploiting this bottleneck actually look like?

The Theory of Constraints advises: identify the system's constraint, decide how to exploit it, subordinate everything else to that decision, then elevate it if needed. Applied here, that sequence is surprisingly practical.

Exploit first

Before adding people or tools, ask whether your reviewers are spending their attention on things only they can do. Are they catching architectural risks, questioning business logic, flagging security assumptions? Or are they also manually checking formatting, hunting for obvious bugs, and piecing together change summaries that could be generated automatically? If it's the latter, you're wasting capacity at the constraint before you've even started.
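One rough way to answer that question is to tag a sample of past review comments by type and see how much of the reviewer's attention went to mechanical work. The sketch below assumes you have already labeled the comments by hand; the category names and the `mechanical_share` function are hypothetical, not part of any review tool.

```python
from collections import Counter

# Hypothetical labels for comments a linter, test suite, or bot could have raised.
MECHANICAL = {"formatting", "naming", "obvious_bug", "missing_summary"}


def mechanical_share(comment_categories):
    """Return the fraction of review comments that fall in mechanical categories."""
    counts = Counter(comment_categories)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    mechanical = sum(n for category, n in counts.items() if category in MECHANICAL)
    return mechanical / total


if __name__ == "__main__":
    sample = ["formatting", "formatting", "architecture", "obvious_bug", "security"]
    print(f"{mechanical_share(sample):.0%} of sampled comments were mechanical")
```

A high share suggests the constraint is being spent on work that could be moved off the reviewer before any new capacity is added.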

Then subordinate

Non-constraints should align their performance to support the constraint. In practice, this means rethinking how AI generation tools are configured. Smaller pull requests. Better commit discipline. Auto-generated context summaries that give the reviewer a head start. The generator should be making the reviewer's job easier, not harder.
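As an illustration, a pre-review check could hold back pull requests that are too large or arrive without context. This is a sketch under assumptions: the thresholds are placeholders to tune per team, and the `PullRequest` fields are hypothetical rather than taken from any particular platform's API.

```python
from dataclasses import dataclass

# Placeholder limits; assumptions for illustration, not recommendations.
MAX_LINES_CHANGED = 400
MAX_FILES_CHANGED = 15


@dataclass
class PullRequest:
    title: str
    lines_changed: int
    files_changed: int
    summary: str = ""


def review_readiness_problems(pr):
    """List the reasons a pull request is not yet ready for a human reviewer."""
    problems = []
    if pr.lines_changed > MAX_LINES_CHANGED:
        problems.append(f"{pr.lines_changed} lines changed; split into smaller PRs")
    if pr.files_changed > MAX_FILES_CHANGED:
        problems.append(f"{pr.files_changed} files changed; narrow the scope")
    if not pr.summary.strip():
        problems.append("no context summary for the reviewer")
    return problems


if __name__ == "__main__":
    pr = PullRequest("Refactor billing", lines_changed=1200, files_changed=8)
    for problem in review_readiness_problems(pr):
        print("-", problem)
```

The point of the design is that the check runs on the generation side, so the cost of an oversized change lands on its author rather than on the reviewer.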

Then elevate

This is where AI-assisted code review enters the picture, and it's now a serious, fast-growing category. Tools like CodeRabbit, Qodo, and Greptile have moved from novelty to core infrastructure, with adoption spanning companies from two-person startups to Fortune 500 enterprises. The code review automation market grew from $550 million to $4 billion in 2025 alone. These tools don't replace the human reviewer; they handle the mechanical layer so the human can focus on what genuinely requires judgment. Teams using AI-assisted review report a 40% reduction in review time and 62% fewer production bugs.

One important caveat

A point from Goldratt that often gets forgotten: once you elevate the constraint successfully, it moves somewhere else. The bottleneck doesn't disappear; it shifts. That's actually the point. The work of a healthy engineering system isn't to eliminate all constraints. It's to keep finding them and keep raising the ceiling.

The question worth asking now

The teams winning at this aren't necessarily the ones generating the most code. They're the ones who noticed that velocity and quality run at different clock speeds, and decided to close the gap deliberately rather than hope it resolves on its own.

If your team has adopted AI generation tools (see how we adopted Claude at DataChef), the right question isn't whether that was a good idea. It probably was. The question is whether your review capacity scaled with your production capacity. Whether you've named the bottleneck. Whether you're exploiting it, or just watching inventory pile up in front of it.